The Fundamentals of VR Storytelling

SONA: Immersive Storytelling Festival - Schedule Page

Download the slides as a PDF and PowerPoint.

Let’s jump right in

Introductions

Who am I to talk to such a talented room of people anyway?

All of that just means: we’re hanging out at the intersection of art and technology. See you there later.

Let’s get into it!

This talk is a combination of a few lectures and other talks, so part 1 lost its narrative cohesion. Instead, it’s just some of the best points I couldn’t bring myself to delete.

Then part 2, the real talk, is how to do immersion.

So, in no particular order! Some background and notes on VR Storytelling.

We have to start somewhere, and that’s with definitions. Immersion!

Normally we break the term down: depth of immersion, fidelity of immersion, ease of immersion, and so on; but we’re a room of storytellers!

A definition is only as good as it helps us make decisions.

So our definition gets simpler: It’s storytelling!

But, because immersive media like VR is about our perception and senses, it’s the suspension of disbelief - just like you know and love - of our senses.

How can we get the user focused on your story, and not on all of this junk they have strapped to their face?

Here, I like to steal from the world of HCI and Interaction design, where the ‘don’t make me think’ principle stands tall.

We want to create worlds, a virtual reality, that is not fooling them into thinking it’s realistic, but is getting out of the way to let them directly engage, directly react, and be receptive to our story.

You might think that engagement makes this easier! You’d be wrong.

We are asking for more of their senses, more of their time, more of their attention, more intimacy, more removal from their own world. VR asks a lot.

Think TV vs. movie vs. Netflix reality junk show. VR is high on that spectrum of how much attention and focus the user has to give us.

This is a contract we have with our users.

When you ask for more, users expect something in return. In general, what they are asking for is immersiveness. Users want to let go into the story, and we want them to. But we have to fight reality, which is in the way.

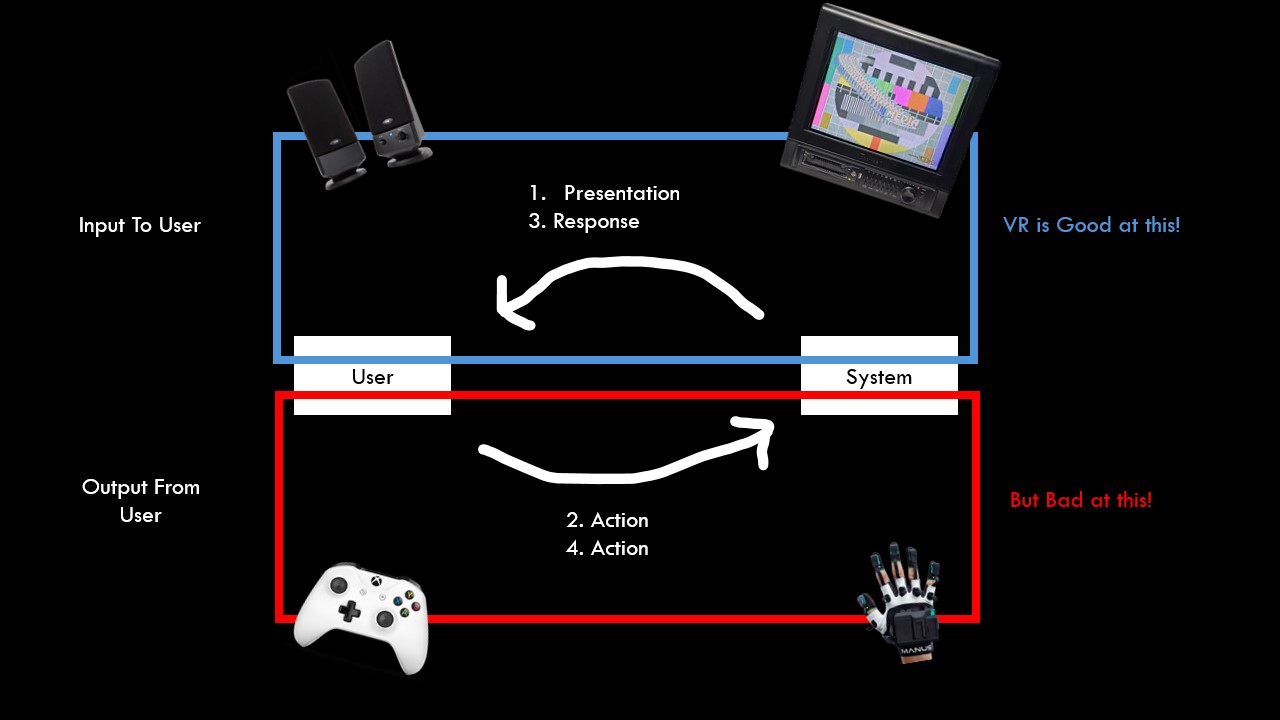

Strengths: Great Display

Weaknesses: Not great input

A good answer to “why vr” is “Because it feels cool!” That’s a perfectly valid answer!

VR is an INCREDIBLE display, it’s INCREDIBLE speakers… let’s lean into the strengths!

E.g. don’t let locomotion be your central verb if your experience isn’t about locomotion.

Big whale!

VR is good at scale! Whale Big!

theBlu by WEVR. Maybe the first VR experience a lot of us saw! It hits hard!

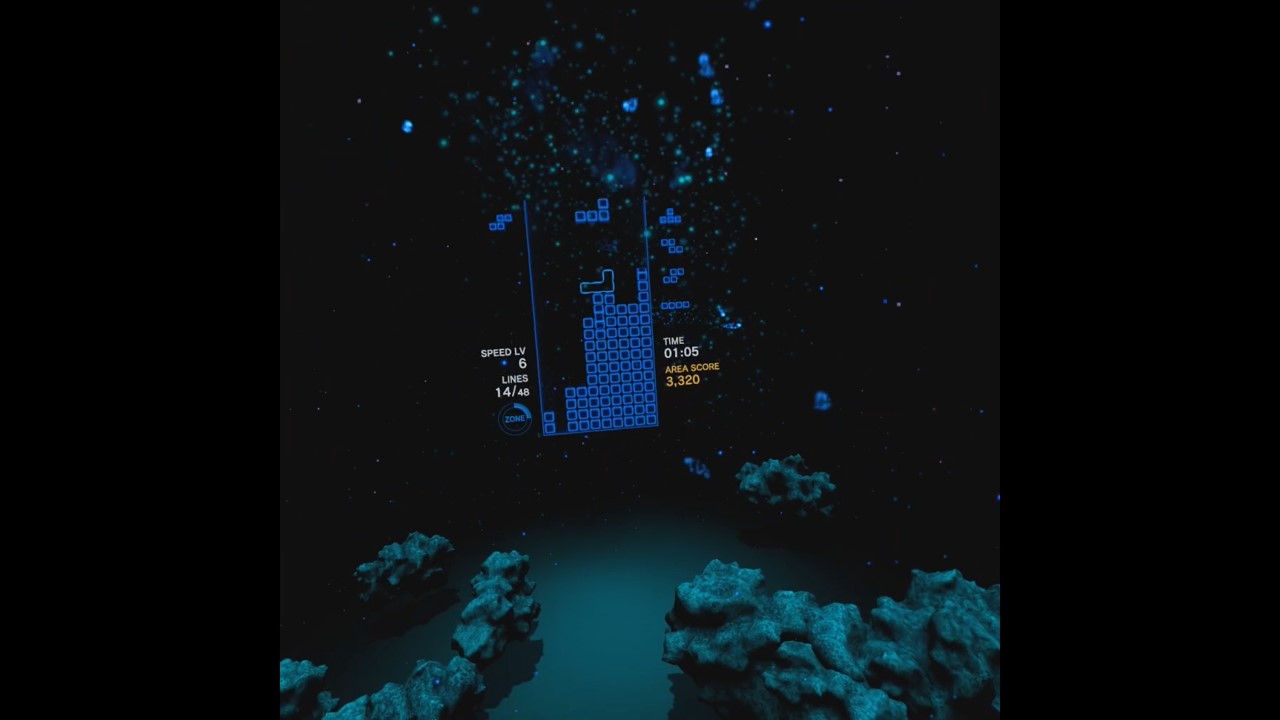

Tetris Effect.

It’s just Tetris. And it’s INCREDIBLE. It’s so good!

Center? Just Tetris. But the game is at its best in your periphery. It’s all just so awesome being in these particle-filled spaces and locking into a game of Tetris. I’ve lost so many hours of my life to this game.

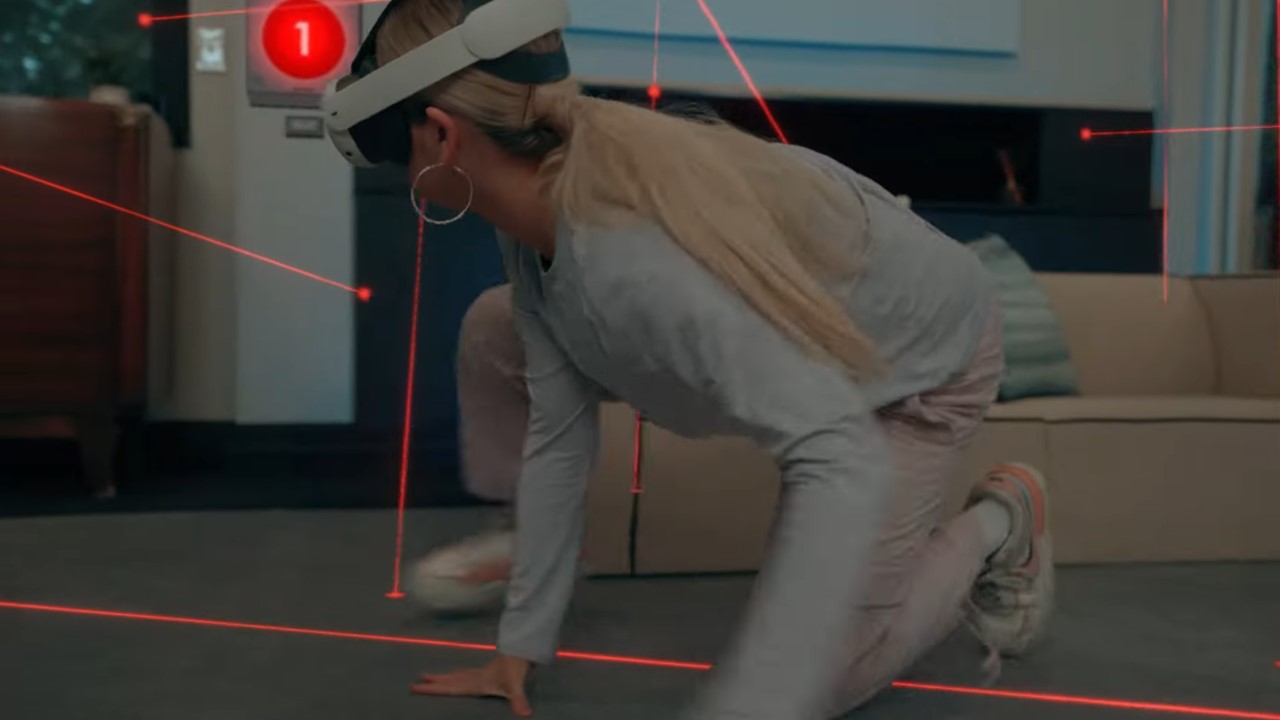

The exception proves the rule. If you are using proprioception, kinesthetics, embodiment… then go all in, and do it well! Like LaserDance, here, or Beat Saber.

(But this isn’t a game design talk so let’s move on)

I think we all understand we don’t have to do first person stories.

But, I’m giving a fundamentals talk, so it just has to be said. I don’t need to be inside of the face of a character.

You can just do fly on the wall. A history of cinema, theater, and everything else has proven that that’s fine! We can empathize with people we are looking at! It’s okay!

Consider Apple Vision Pro’s NBA courtside experience.

When I look down, I don’t see any legs! (sarcastic gasp)

It’s fine, I’m watching a basketball game! It’s fine that this is an impossible place to watch from, it’s a good place to watch a basketball game from.

(Shout out to all the Apple Vision Pro users. There are tens of us.)

Perception - What are the things our senses need to understand a space? If we can understand it instantly, reflexively… that’s (part of) immersiveness.

Immersiveness is going to come from designing effectively for our senses.

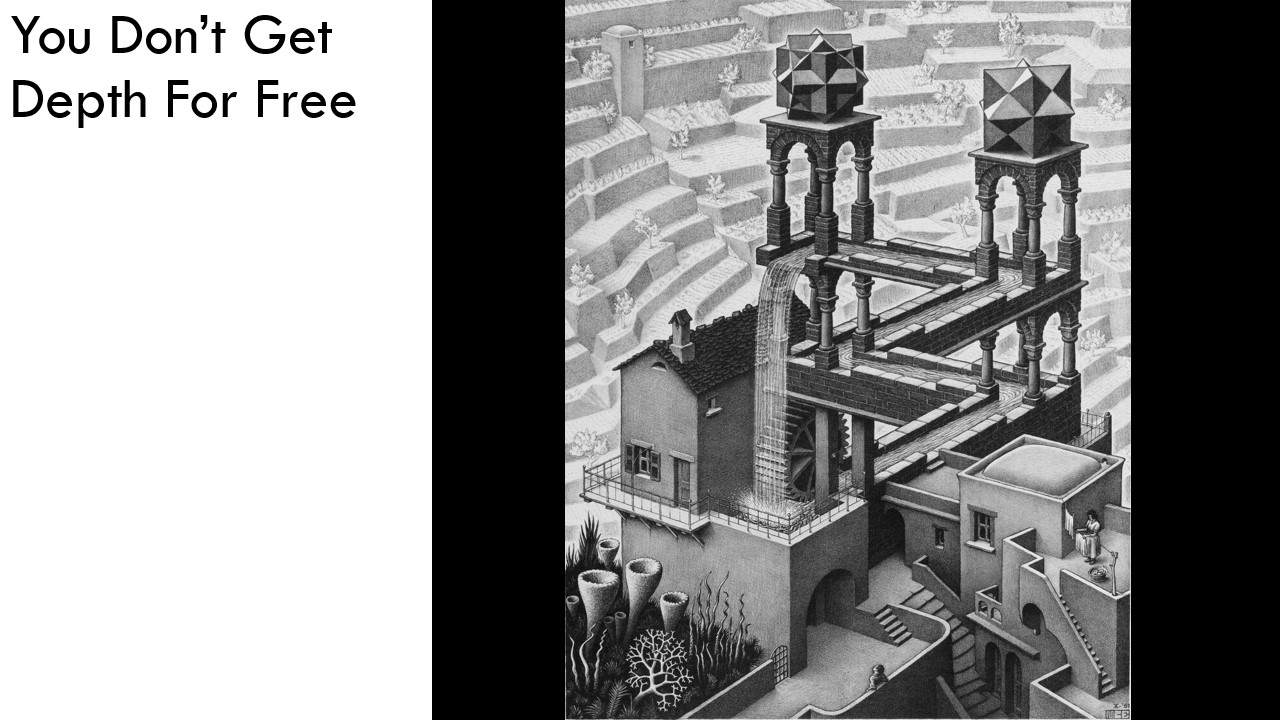

And you don’t get it for free!

I don’t care what 3D engine or hardware stack you’re using. We don’t get it for free!

Optical illusions are real, they often come from mismatched depth cues. Our job is to create the opposite of an optical illusion.

Create a world that is about what it is about, and is not just about the fact that it’s there at all.

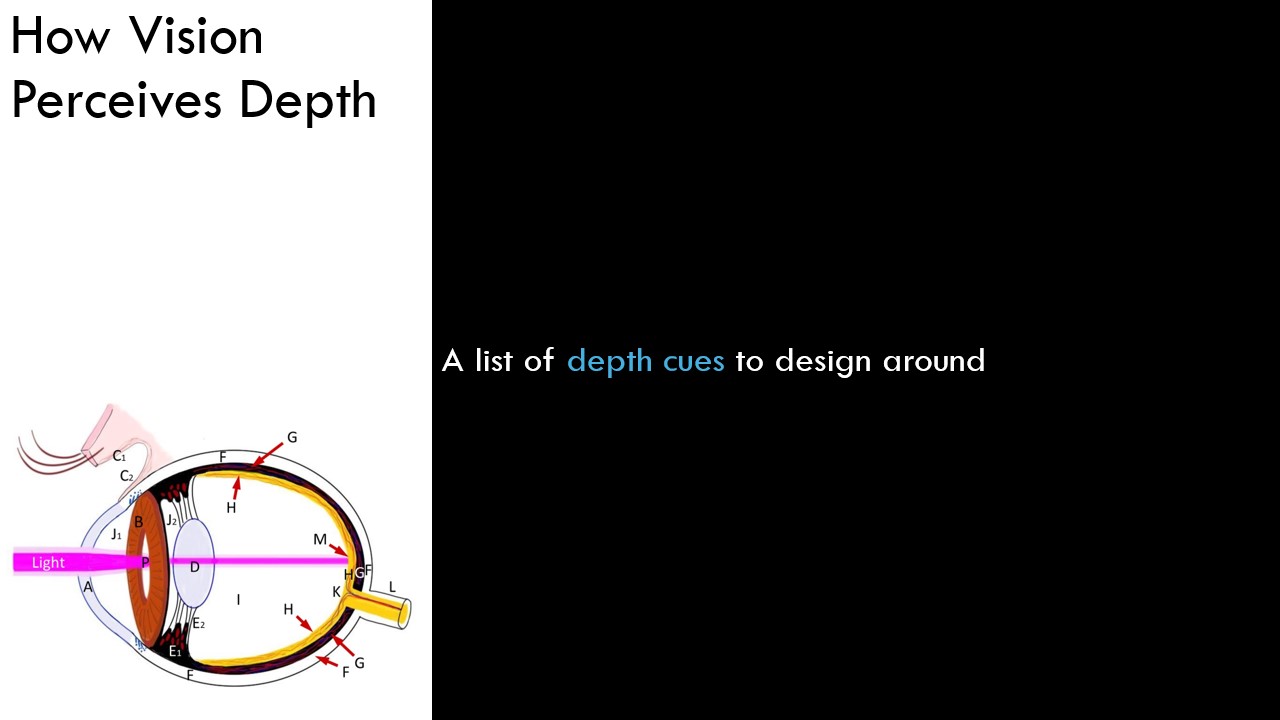

In vision, we can simplify how our eyes work down to one consideration: depth.

If we can detect depth, we can build up an understanding of our environment.

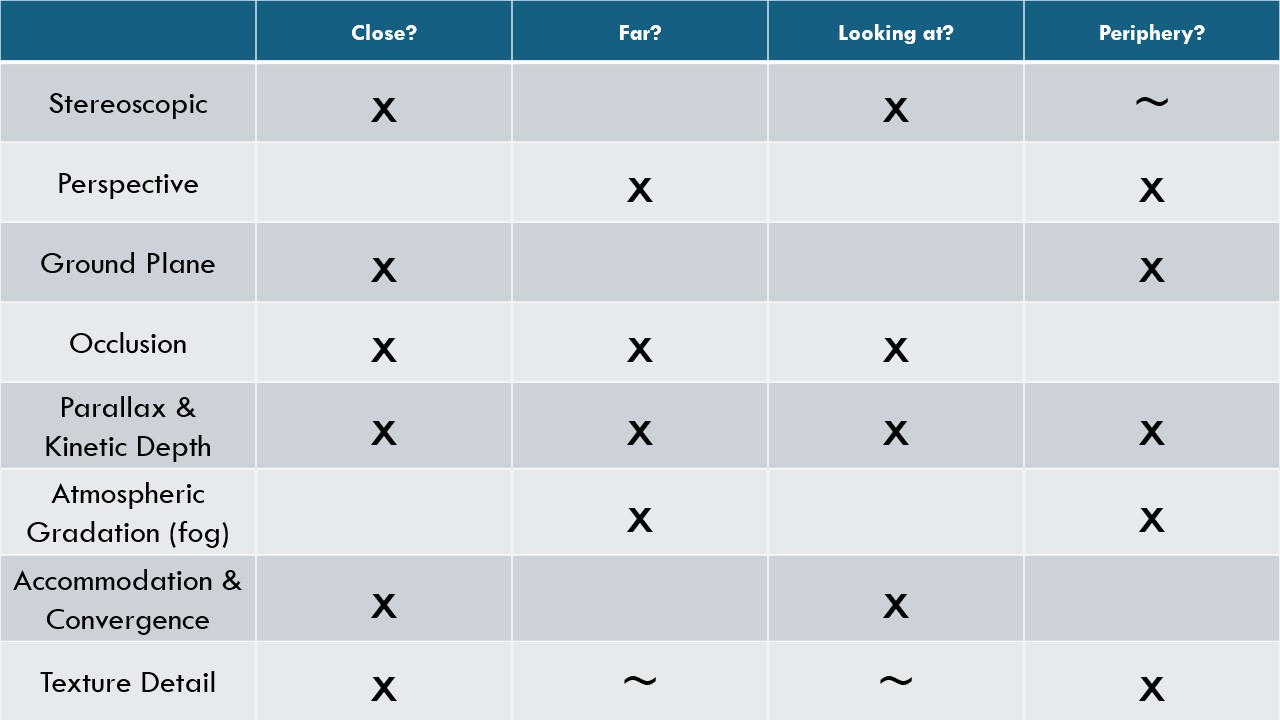

So let’s run through some depth cues we should pay attention to.

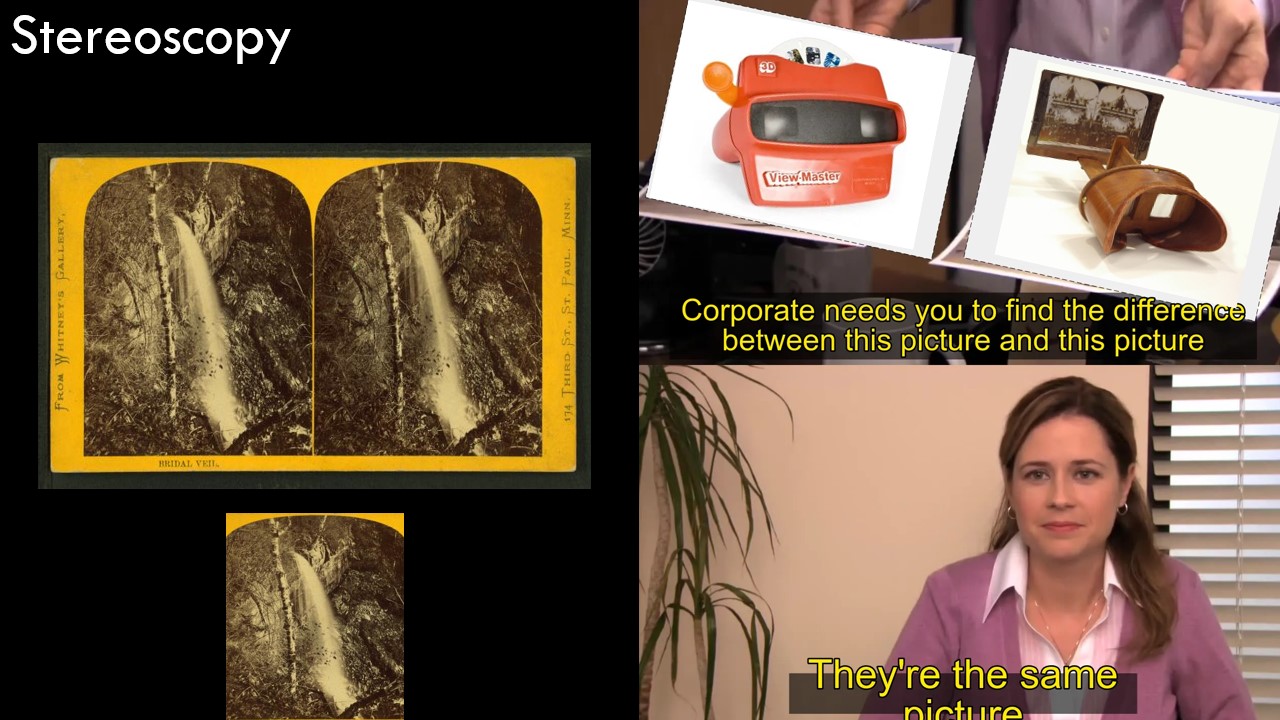

Let’s start easy: Stereoscopic. Left/right images.

We do get this one for free out of our rendering engine. But, it’s not very effective outside of 40-60 feet or so. It stops being useful for anything far away. The images from our eyes just aren’t that different.
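If you want to see that falloff in numbers, here is a rough sketch. It is not from the talk's slides: it assumes a typical 63 mm spacing between the eyes and uses the vergence angle (how far the two lines of sight converge) as a stand-in for how different the left and right images are.

```python
import math

IPD_M = 0.063  # assumed typical interpupillary distance, in meters


def vergence_angle_deg(distance_m, ipd_m=IPD_M):
    """Angle between the two eyes' lines of sight when fixating a point straight ahead."""
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))


def disparity_deg(near_m, far_m, ipd_m=IPD_M):
    """Difference in vergence angle between two depths: a rough proxy for stereo disparity."""
    return vergence_angle_deg(near_m, ipd_m) - vergence_angle_deg(far_m, ipd_m)


if __name__ == "__main__":
    # Compare each distance against something one meter further back.
    for d in (0.5, 2.0, 15.0, 50.0):
        print(f"{d:5.1f} m: vergence {vergence_angle_deg(d):.3f} deg, "
              f"1 m of extra depth changes it by {disparity_deg(d, d + 1.0):.4f} deg")
```

One meter of extra depth is a big angular change at arm's length and a tiny one around 15 m (roughly 50 feet), which is why stereo alone can't carry your far-away scenery.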

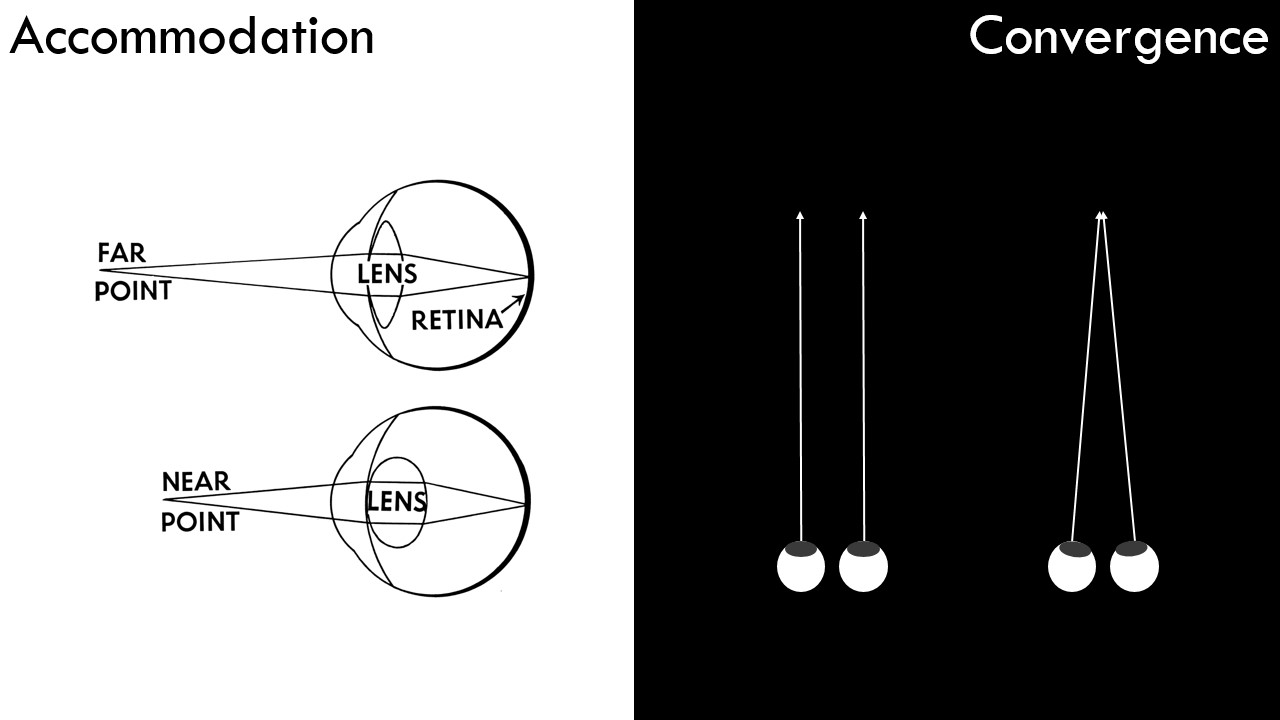

Another one we don’t have a lot of control over: the understanding of the muscles of our eyes.

We have a muscle that flexes the goopy gross lens sack of juice that changes focus. That’s accommodation.

And we have the ‘how cross-eyed are we’ muscles that control vergence.

These cues work, of course, for what we are looking at. Not periphery. And they aren’t very effective for things far away.

(And VR hardware is bad at them in general)

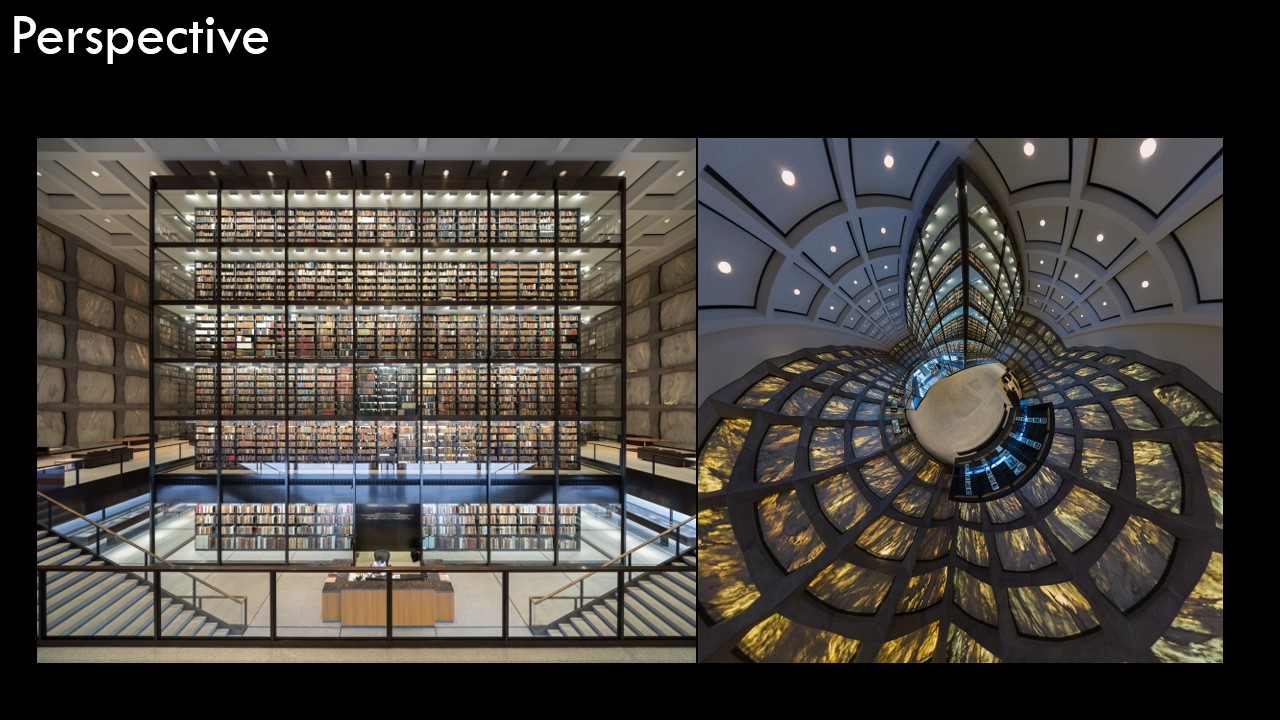

Things get bigger when they are closer to us. Incredible - another one for free out of the rendering engine.

But it doesn’t work if you put everything the same distance away! Or if all the walls have no textures or details! Or if there is nothing in the scene! Perspective needs things to work!

Stop putting me in the middle of a radially symmetric room! I’m begging you! I know it makes sense when you’re dragging GameObjects into a scene, but you gotta stop it!

Here’s a photo I took at the Miller Gallery.

It makes me feel so uncomfortable! The mismatched, but separately strongly implied, perspectives. Geh.

So don’t do that. (It’s surprisingly easy to make things that feel this uncomfortable by accident.)

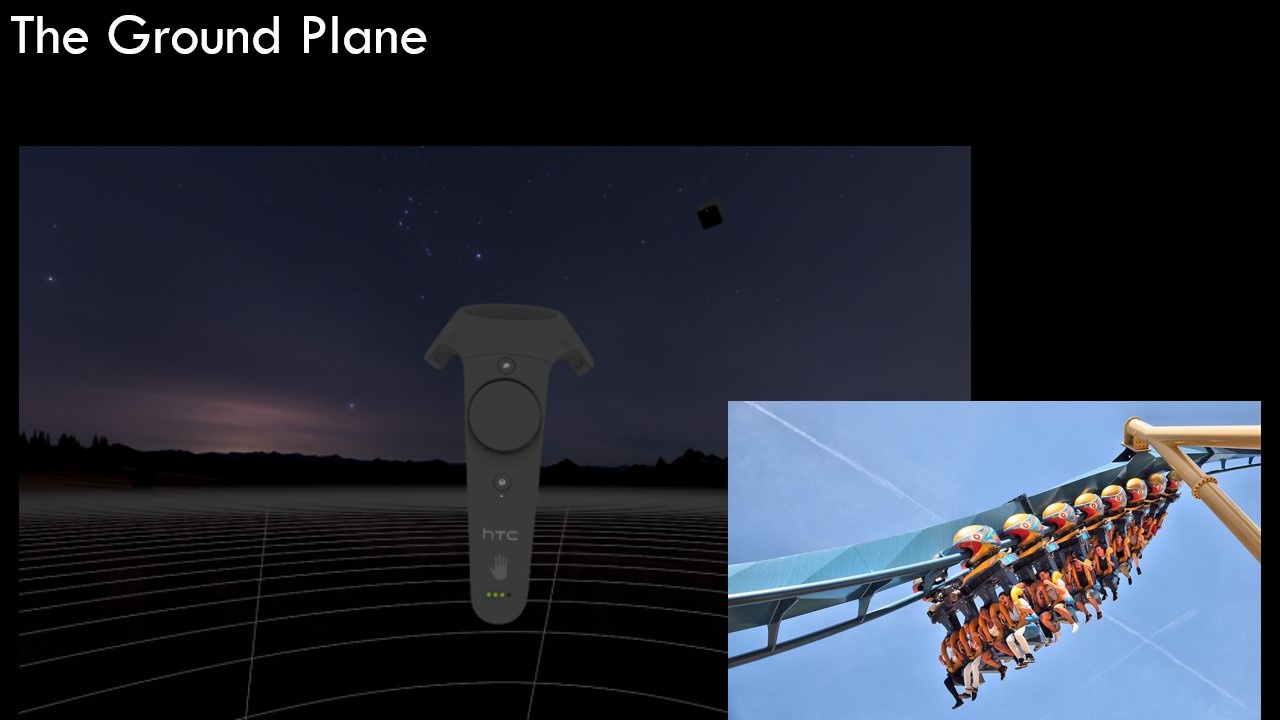

Ground plane! It’s so important!

You must, by default, put down a ground plane. I really need it to know how big I am. And if the ground plane is wrong, I just feel like I’m tiny, not crouched.

This semester a student made a horror game with you wading through the swamp - and it didn’t work!

This is a fun rule to selectively break. Sliding the ground plane up and down is instant motion sickness.

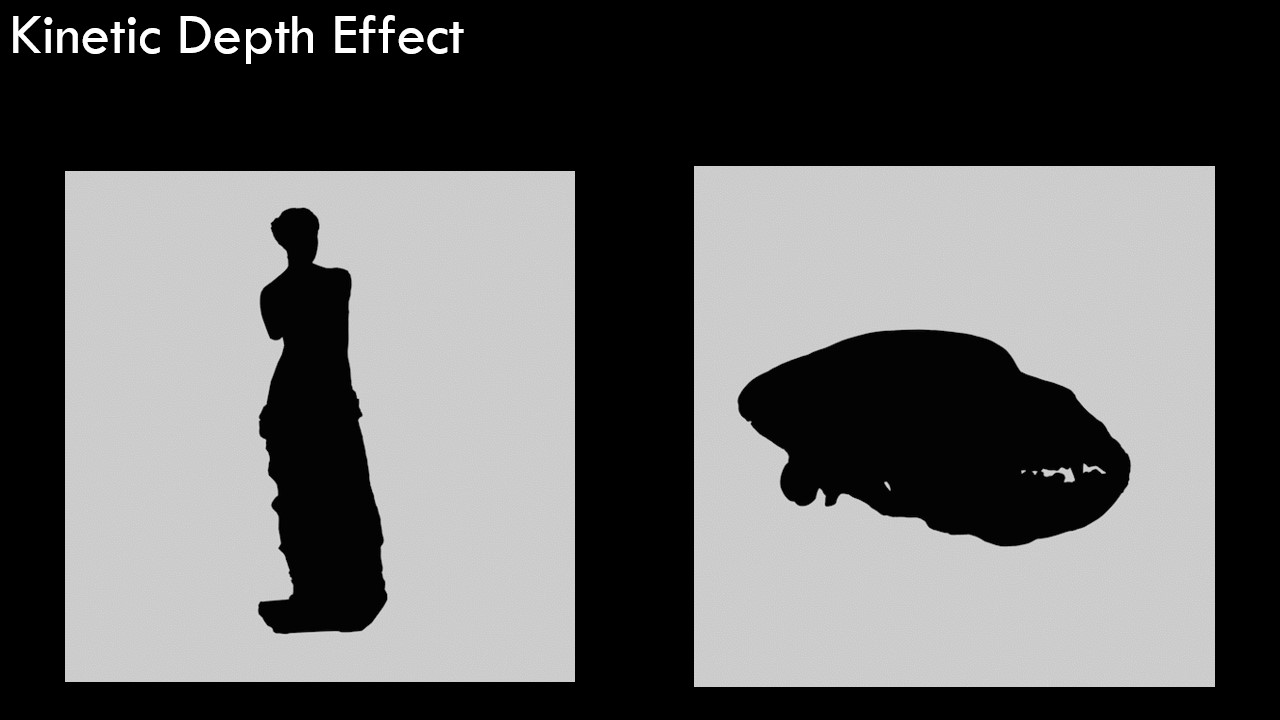

Our brains are good at object recognition, especially with strong silhouettes. (Always a good design principle)

And as those silhouettes deform, we can understand shape remarkably well!

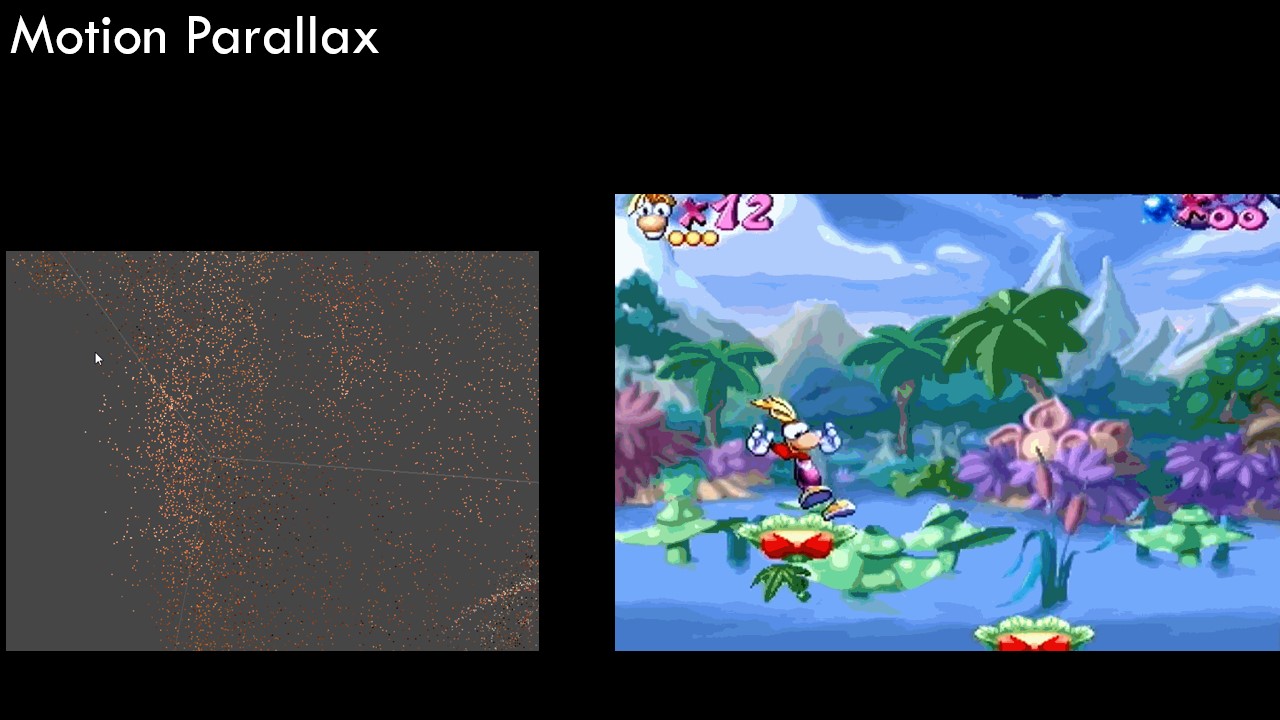

Motion parallax, the most important one. This is one of the most powerful depth cues, and it’s what is really special about 6DOF VR.

You have to encourage your users to move their heads. Even the smallest head movement communicates so much.

Look at this nonsense of noise - and for just a moment, it’s OBVIOUS that it’s a face/head. Then it’s back to gibberish. We’re so good at reverse engineering the motion of thousands of dots into a movement of our head.

This only works if there is stuff in the scene, and things nearby!
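To put a number on "things nearby", here is a back-of-the-envelope sketch (mine, not from the slides). It assumes an object straight ahead and a purely sideways head movement, and asks how far the object appears to swing across your view.

```python
import math


def parallax_shift_deg(head_shift_m, distance_m):
    """Apparent angular shift of an object straight ahead after a sideways head move."""
    return math.degrees(math.atan2(head_shift_m, distance_m))


if __name__ == "__main__":
    lean_m = 0.05  # a small 5 cm lean, chosen only for illustration
    for distance_m in (0.5, 5.0, 50.0):
        shift = parallax_shift_deg(lean_m, distance_m)
        print(f"object at {distance_m:4.1f} m shifts by {shift:.3f} deg")
```

The same tiny lean swings a nearby object by several degrees and a distant one by almost nothing. That difference between layers is the cue, so a scene with only far-away things gives the user's head nothing to work with.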

Don’t have anything in your scene? Then just put dust motes in your scene!

Unmotivated! Doesn’t matter! Cover your scene in dust motes! Show me too much, I dare you!

You’re afraid of it getting noisy? Show me too much. It’s possible, but go find that line.

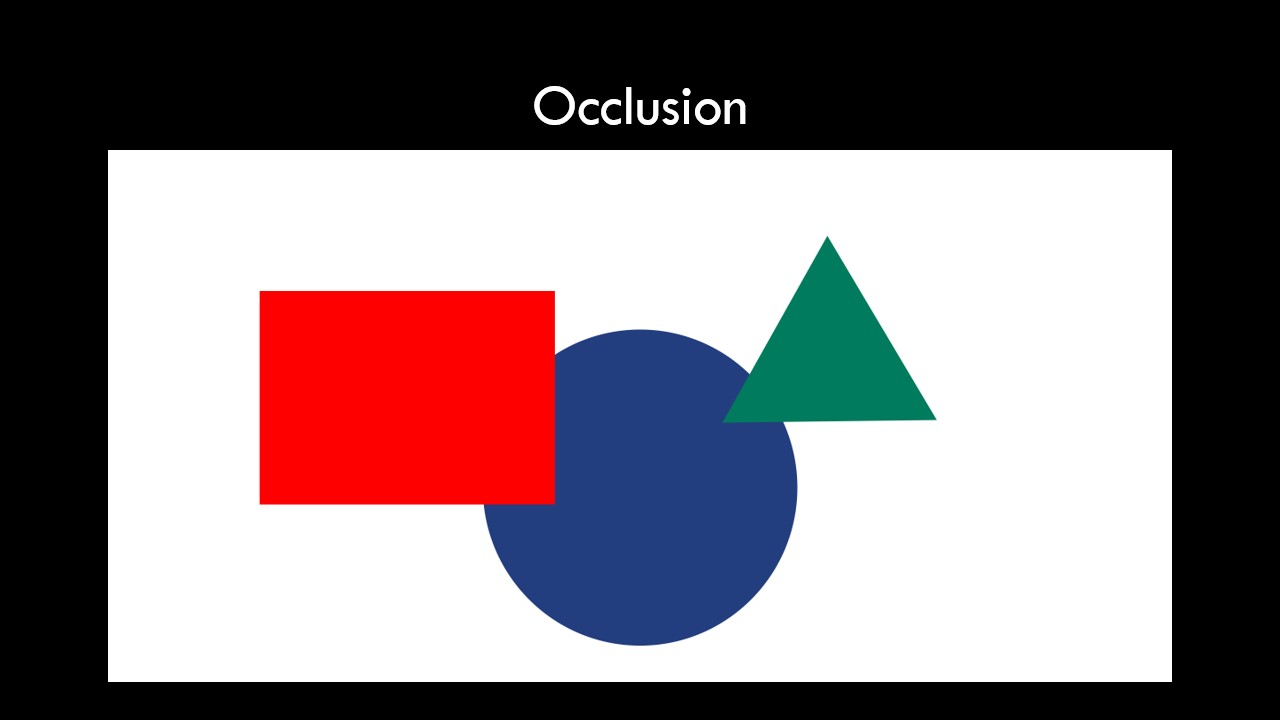

Related to motion parallax is occlusion. Pretty obvious, things that are closer block things that are further.

[flips back/forth with previous slide]

There’s also some ‘distance above horizon’ happening in this example.

Half-Life: Alyx never misses an opportunity to just put a bunch of junk in the foreground.

Pipes! Dangling wires! All the time, every scene - always at multiple layers; lots of windows, lots of foreground elements.

You get all these cues. Do it!

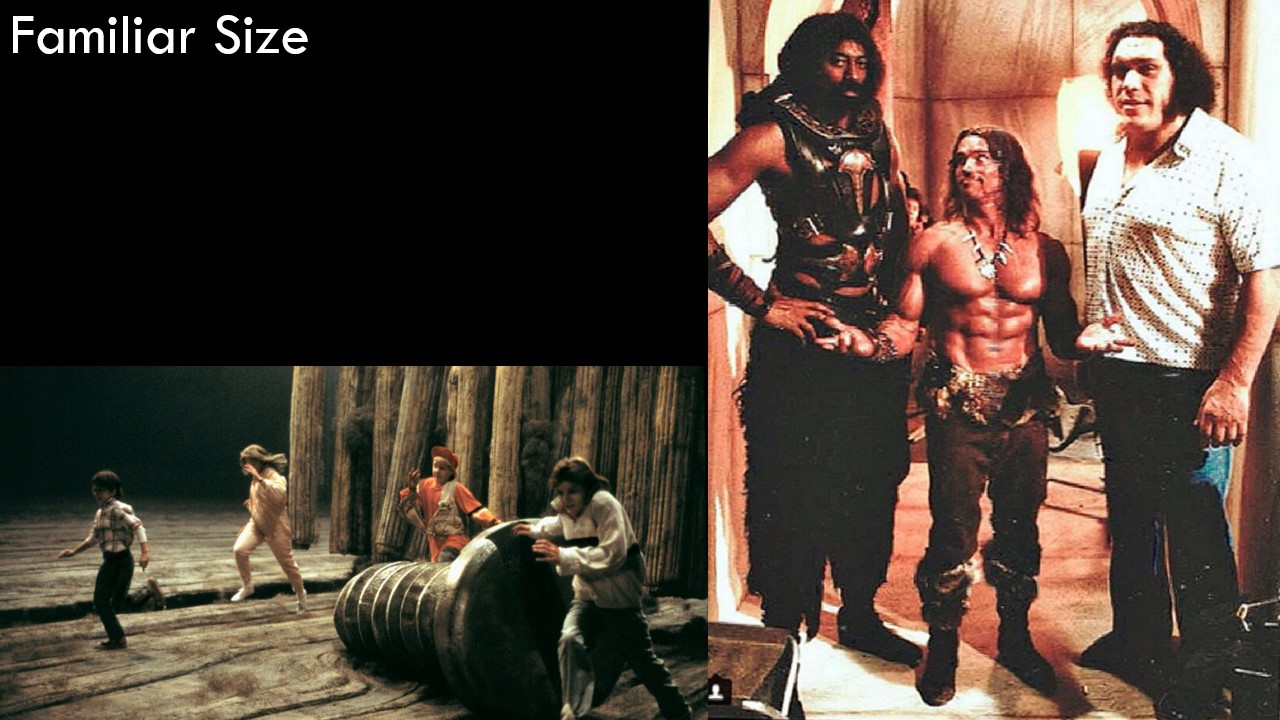

Put things in your scene whose scale we understand intuitively.

Who is that in the middle?

This is Wilt Chamberlain, Arnold Schwarzenegger, and Andre the Giant on the set of Conan the Destroyer. Yeah!

We know how big humans are supposed to be. These “incorrectly” sized humans make us read Arnie as small.

Honey, I Shrunk the Kids: ‘familiar size’ via the prop department is basically its only trick to establish scale. It’s a good one!

If you have nothing familiar, create something familiar with our good friend: copy/paste.

Oh, I know how big this trash can is next to me, and there’s one over there. It’s small. I know how big that is!
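The trash can trick is just geometry our brains do for free. A small sketch of it, assuming a hypothetical 0.9 m tall trash can (the size is made up for illustration): if you know the real size and see how much of your view the thing takes up, the distance falls right out.

```python
import math


def angular_size_deg(size_m, distance_m):
    """How many degrees of the view an object of a given size covers at a given distance."""
    return math.degrees(2.0 * math.atan(size_m / (2.0 * distance_m)))


def distance_from_familiar_size(known_size_m, seen_angle_deg):
    """Invert the relation above: known real size plus apparent size gives distance."""
    return known_size_m / (2.0 * math.tan(math.radians(seen_angle_deg) / 2.0))


if __name__ == "__main__":
    trash_can_m = 0.9  # hypothetical familiar object height
    for true_distance_m in (1.0, 4.0, 12.0):
        seen = angular_size_deg(trash_can_m, true_distance_m)
        guess = distance_from_familiar_size(trash_can_m, seen)
        print(f"covers {seen:6.2f} deg of view -> must be about {guess:.1f} m away")
```

Copy/paste works because the nearby copy teaches the user the real size, and every other copy then acts as a ruler for distance.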

So yes: fill your scenes with trash… cans. And window sills and potted plants and railings.

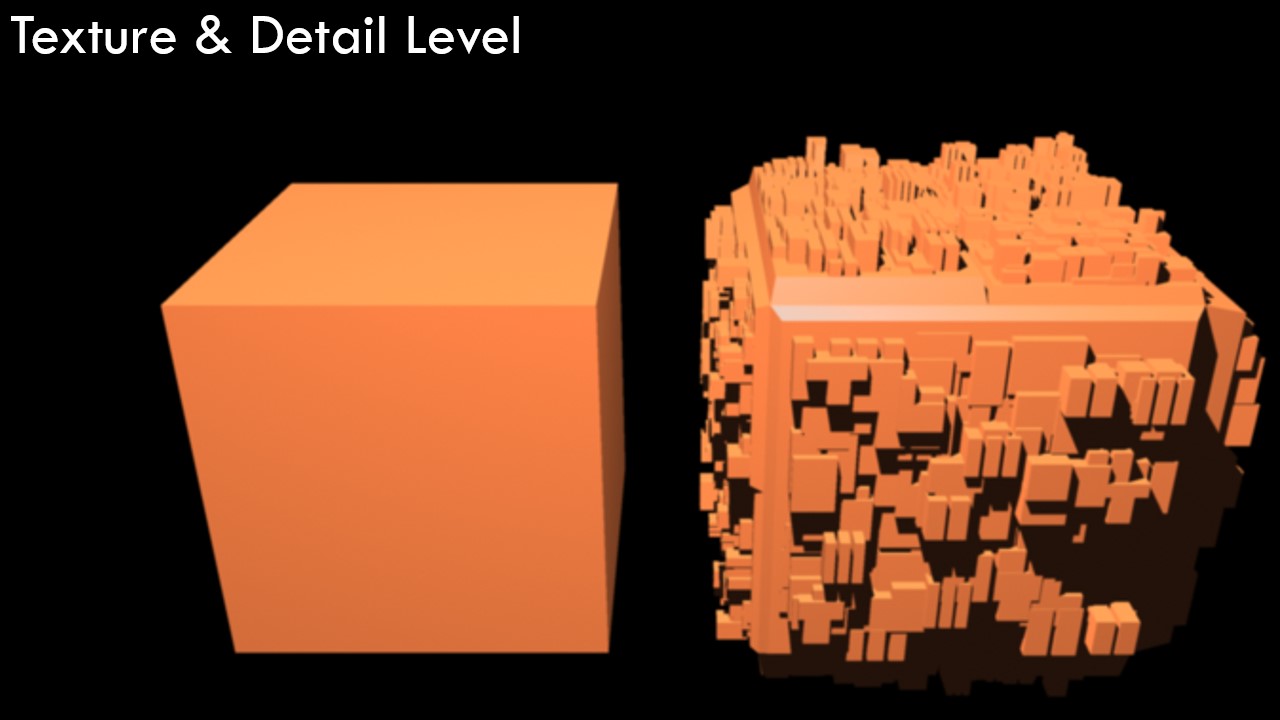

Texture. Another obvious one: our stuff needs texture for this to work.

Please stop greyboxing and using blank cel-shaded things. Or do that, but just give it a little noise. Give it a wallpaper texture, a paint texture, some paintbrush strokes. Something!

Carpet! Tile floors!

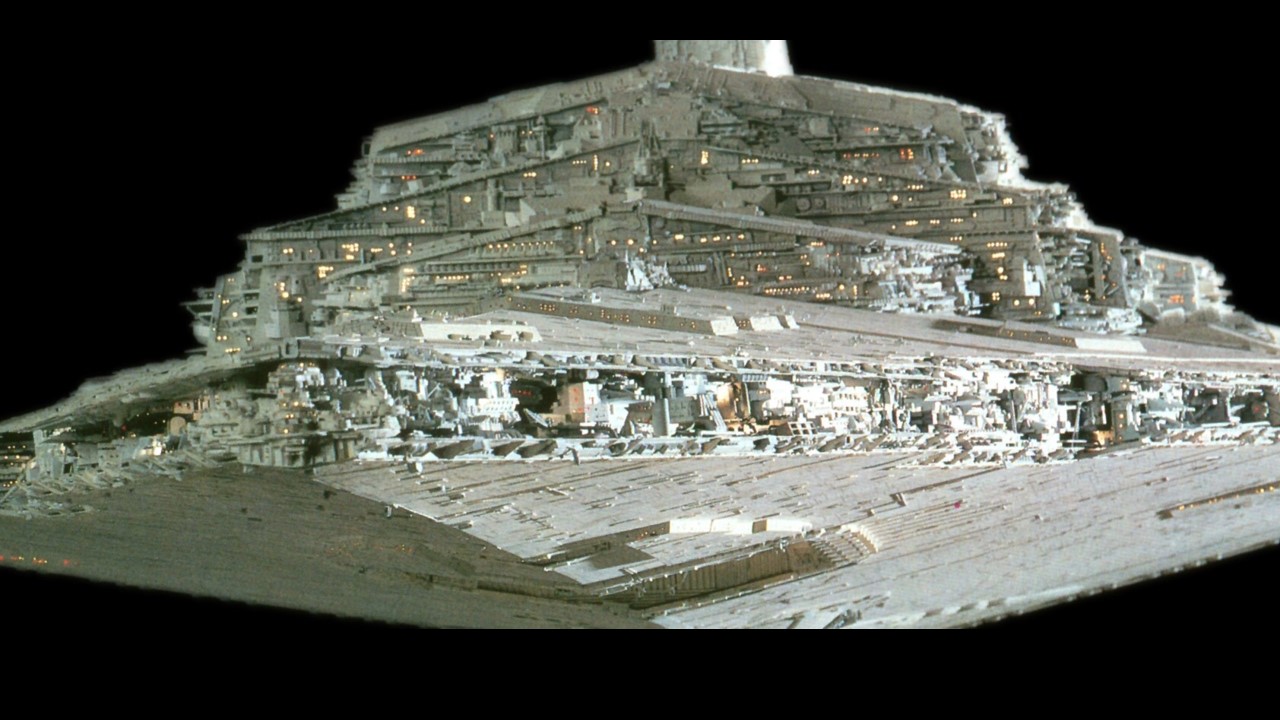

Greebles! Texture but little 3D bits. Greebles! Big things have small things on them.

You can’t just scale up your assets.

How big is this room?

No clue!

How about this room? I have a pretty good guess.

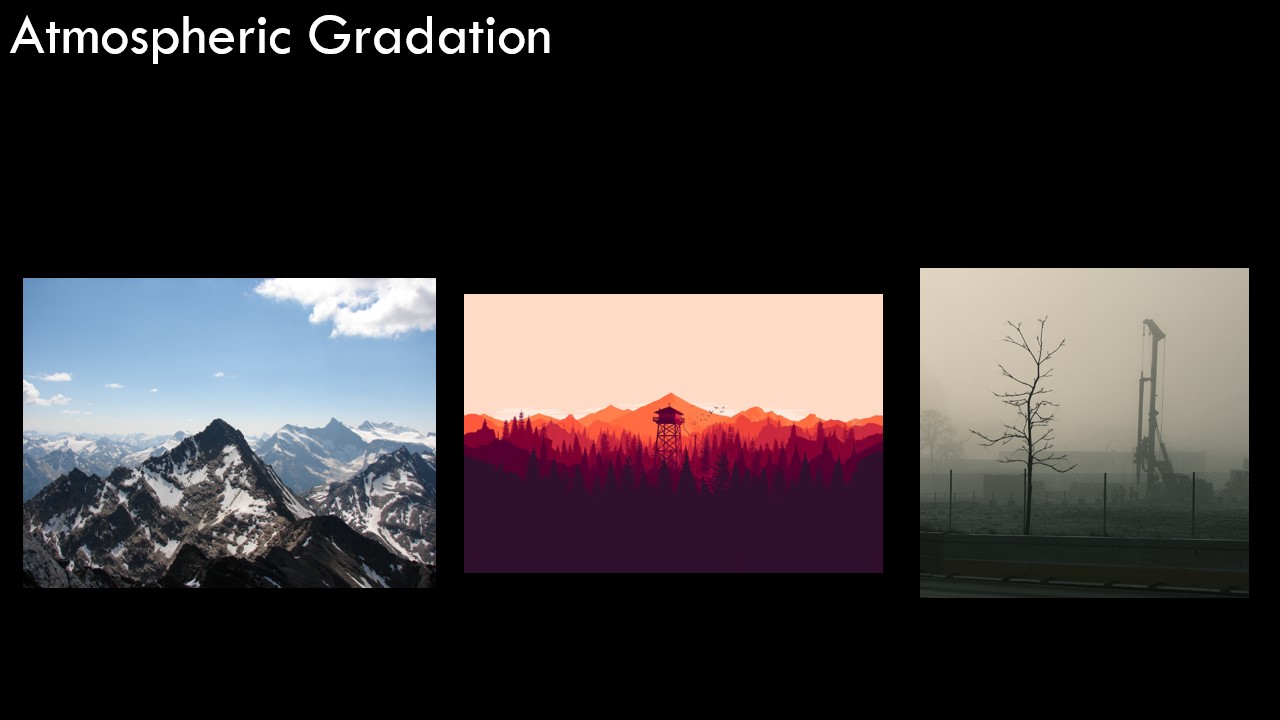

Click the fog checkbox.

Atmospheric gradation is great because it works at any distance. We don’t need fog in our scene, we can just lower the contrast of things that are far away.
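What that checkbox does under the hood is roughly this. The sketch below uses plain exponential fog, a common formula in real-time rendering, with a made-up density value; your engine's exact curve and parameters may differ.

```python
import math


def fog_factor(distance_m, density=0.05):
    """Fraction of the object's own color that survives at this distance (1.0 = no fog)."""
    return math.exp(-density * distance_m)


def apply_fog(color, fog_color, distance_m, density=0.05):
    """Blend an RGB color toward the fog color as distance grows."""
    f = fog_factor(distance_m, density)
    return tuple(f * c + (1.0 - f) * fc for c, fc in zip(color, fog_color))


if __name__ == "__main__":
    red = (1.0, 0.0, 0.0)
    grey_fog = (0.5, 0.5, 0.5)
    for distance_m in (0.0, 10.0, 40.0, 200.0):
        r, g, b = apply_fog(red, grey_fog, distance_m)
        print(f"{distance_m:5.1f} m -> ({r:.2f}, {g:.2f}, {b:.2f})")
```

Everything drifts toward the same grey as it gets further away, so contrast itself becomes a distance readout. Crank the density up and the same cue works for things close by.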

Or you can use fog for up close things. Go crazy with fog!

All the atmospheric scattering! Heck yeah. The scene is basically just rendering the depth map!

Even in a void, you can have fog.

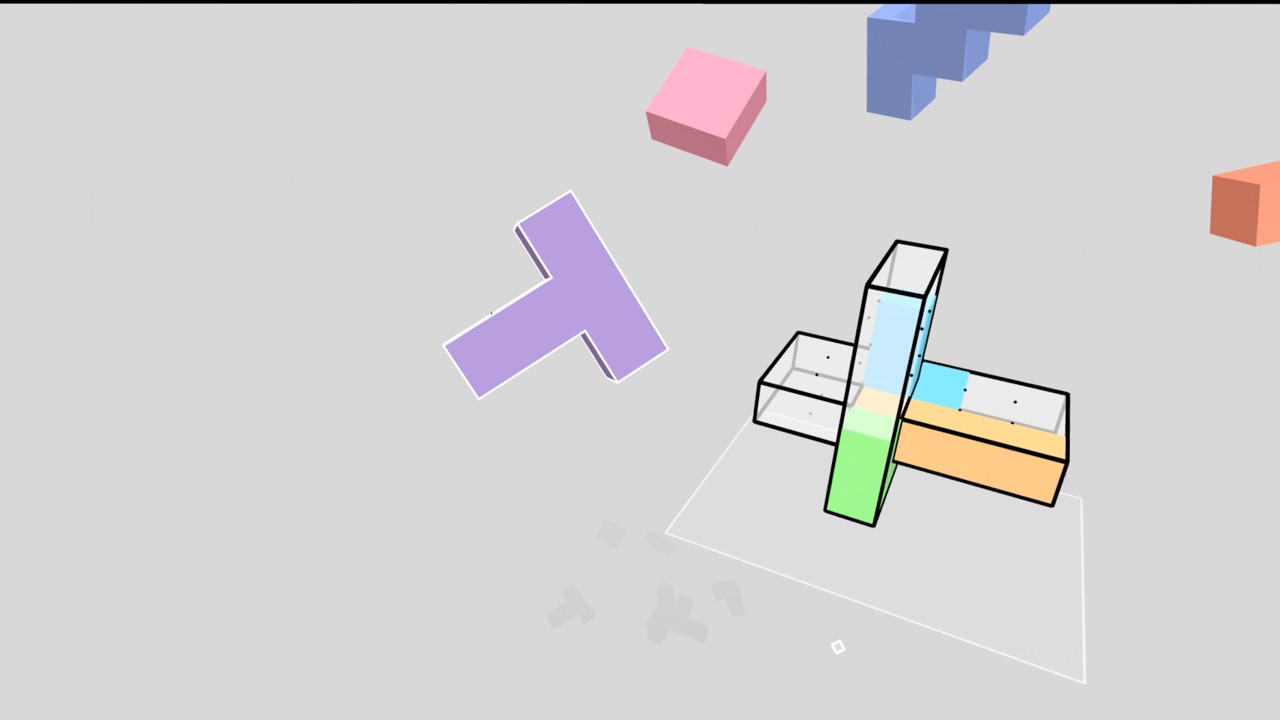

Look at the shadows in Cubism. Even the shadows are fading to grey as they get further away.

The shadows are also implying a ground plane! Not even the void is a void!

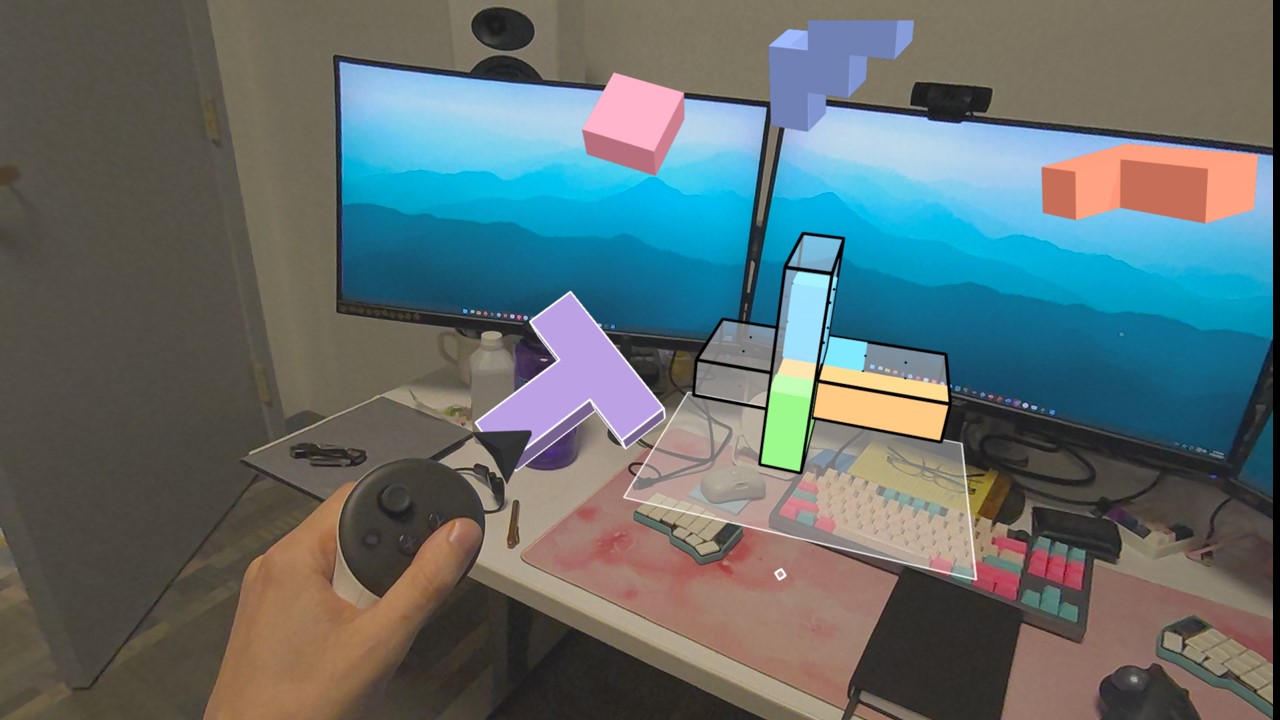

But you can also just do pass-through to get a lot of great depth cues for free…

I think the world is missing more VR storytelling not in environments, but in dollhouses and dioramas that float in front of me that I peer into? I want to do an Agatha Christie story in a dollhouse-scaled environment that you spin around. Sorry, anyway…

Let’s watch a lot of these come together in Moss.

Quill the mouse is going to run around, and basically always be a little bit behind some leaf or bit of grass.

And we have all this foreground junk. As we transition to the next scene here, there’s going to be a leaf in my face blocking Quill, and I will naturally look around it. Moving my head, getting all that good parallax.

I started putting this together as the thesis, but it felt like most of these were squishy. Near & far, periphery & focused - we need to hit all those checkboxes… but really, you just want more!

Let’s jump out of vision to sound. So everyone, take out your headphones…. oh, wait.

We will skip the part where I go over how our ears work, and cut to the takeaways from that.

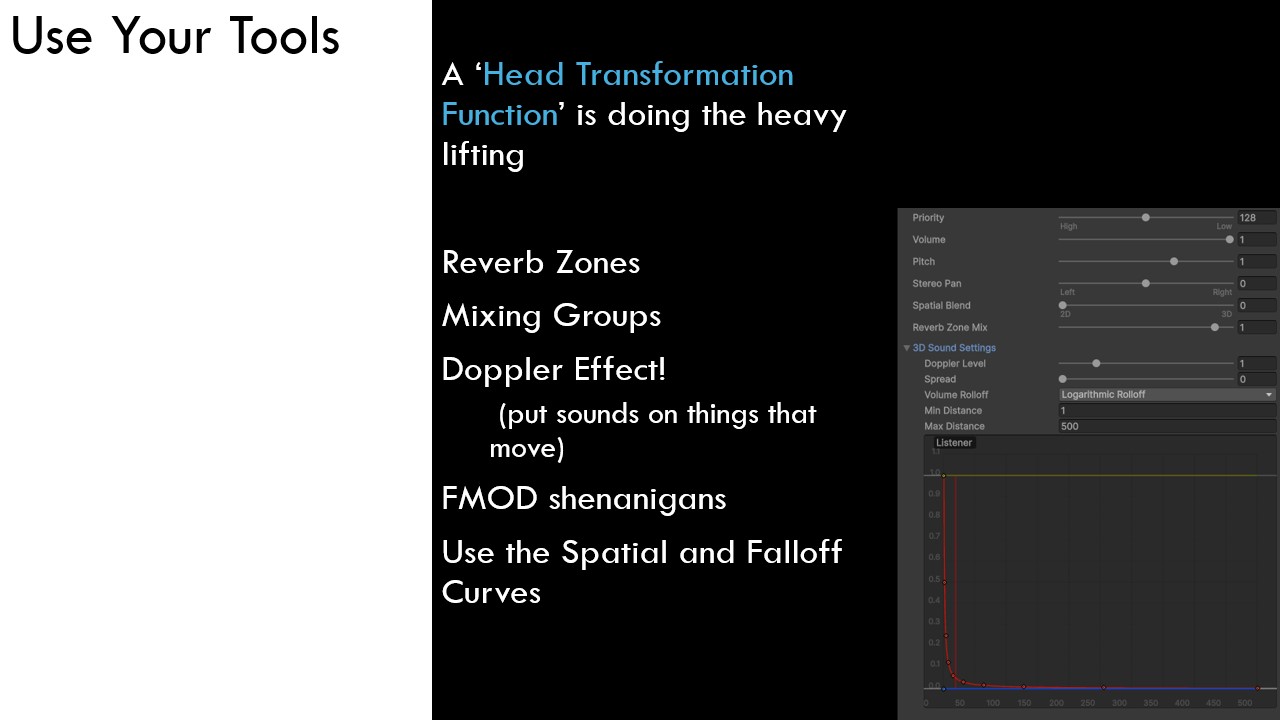

First step: we don’t get it for free! Just being in a 3D engine, we get no sound work for free.

We have to check some boxes and move some sliders. In Unity it’s the 2D/3D slider to enable the ‘head-related transfer function’, which is what simulates the spatialization of audio to make it sound like it’s ‘over there’.
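To give a feel for what the engine is simulating, here is a toy sketch of two of the simplest cues: the tiny delay between your ears and the volume falloff with distance. It is not what Unity runs internally. It assumes a spherical head of 8.75 cm radius, uses Woodworth's classic approximation for the delay, and a simple inverse-distance volume rule.

```python
import math

HEAD_RADIUS_M = 0.0875    # assumed average head radius
SPEED_OF_SOUND_MPS = 343.0  # speed of sound in air at room temperature


def itd_seconds(azimuth_deg):
    """Interaural time difference (Woodworth approximation).

    0 degrees is straight ahead, +90 is directly to the right.
    A positive result means the sound reaches the right ear first.
    """
    clamped = max(-90.0, min(90.0, azimuth_deg))
    theta = math.radians(abs(clamped))
    itd = (HEAD_RADIUS_M / SPEED_OF_SOUND_MPS) * (theta + math.sin(theta))
    return math.copysign(itd, clamped)


def distance_gain(distance_m, min_distance_m=1.0):
    """Inverse-distance volume falloff, held at full volume inside min_distance_m."""
    return min_distance_m / max(distance_m, min_distance_m)


if __name__ == "__main__":
    for azimuth in (0, 30, 90):
        print(f"{azimuth:3d} deg -> ear delay {itd_seconds(azimuth) * 1e6:6.1f} microseconds")
    for distance_m in (1.0, 2.0, 8.0):
        print(f"{distance_m:4.1f} m -> gain {distance_gain(distance_m):.3f}")
```

The whole range of ear-to-ear delay is well under a millisecond, and our brains still read direction from it. A flat 2D source has none of this, which is why it sounds like headphones.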

Lots of features, reverb zones are good; but you can also just fake it and add reverb to the elements yourself.

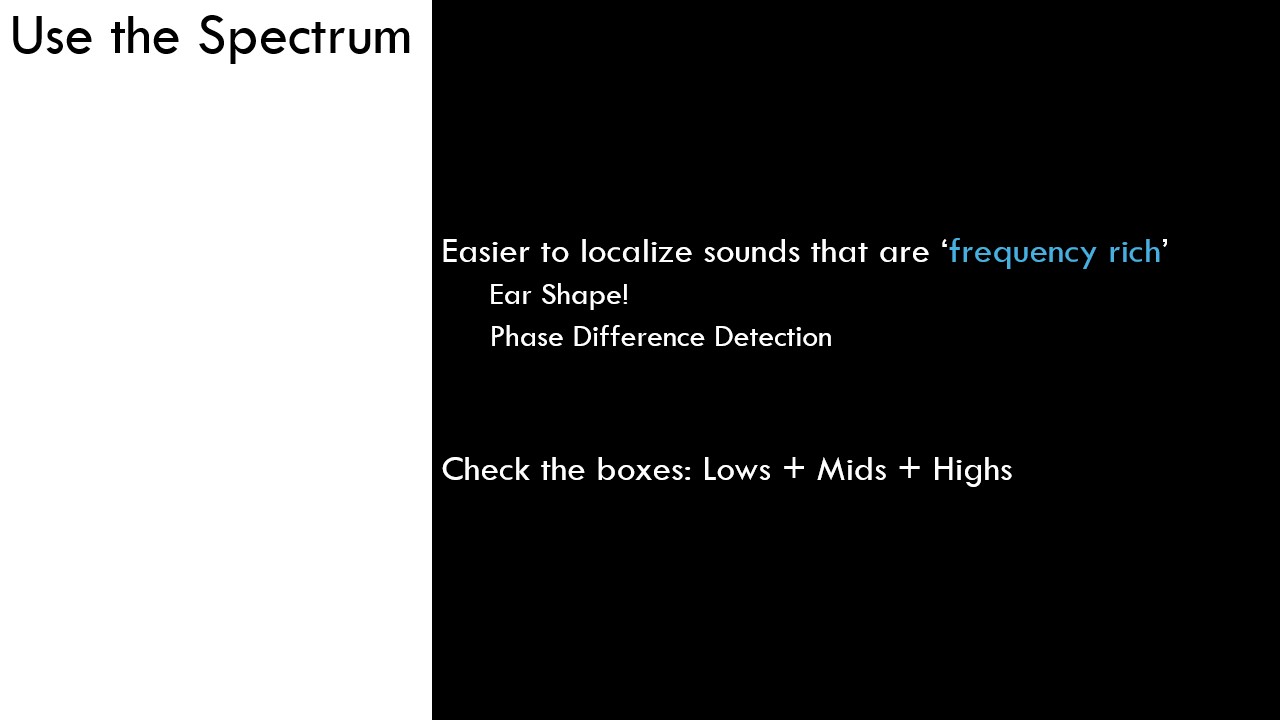

One of the cues we skipped is about how the ear shape affects different frequencies differently from different directions.

So spatialization is harder on pure sine waves than on frequency-rich chords.

Lows, mids, highs. Include them all.

You should make your audio be sourced from somewhere. The alternative is like you’re wearing headphones.

Our brains are really good at separating headphone noise from spatial/reverbed/environment noise. When was the last time you mistook a voice in a podcast for someone standing near you? Was it NEVER?

Not spatializing audio is slapping headphones on your user and trying to convince them that those sounds are part of this environment. It’s not very easy.

But it can be powerful!

Back in undergrad, I was just this idiot who wanted to do all experimental… break all the rules; can’t be the same as everyone. My old professor was constantly giving me the same note: ‘stop it!’

Now I’m the professor:

So, break these rules… but do it on purpose.

To conclude the audio section, you are doing your mix in 3D, and your user’s head is part of this mix.

That’s a challenge, and not super easy with familiar audio tools. You have to test the audio in headset too to get the effect. It’s time-consuming.

Ambisonic things can be used too, but that’s not in scope of this talk.

More depth cues equals more better environments, because the space becomes more understandable to our reflexes, intuition, and senses. That’s less work left for actual conscious thinking, so the user can stay engaged and caring about the story.

I know I’m low on time now, we’re at our conclusion.

Not including all the depth cues is like cooking without salt.

Or in my case, Cajun Power Spicy garlic pepper sauce.

I put this on everything. I put this on ice cream, I don’t care.

Everything I said was tools, so let’s conclude by putting it together a bit.

All of this low-level sensory storytelling means VR is really powerful at one thing: and that’s establishing vibes.

Okay, obviously I mean aesthetics.

Your tools are depth cues and environments. But the objective is still storytelling.

So break any of the rules I’ve said this talk! Break them all!

Ignore anything I have said (if your project is cool as hell)

Make first person poems!

Signifier: Evil things will glow green. Project: green glowing textures. Green dripping goop from pipes that make plink noises. Green tinted fog in the sewers. Etc.

We assign - through repetition or whatever - significance to perceptive elements.

This establishes a motif. In VR, motifs come from perceptive elements. Things we sense and the feeling from sensing them.

Realism should not be your default.

If you say ’this is realistic’, what you’re hiding is that it has no other reason for existing.

Realism as a goal doesn’t help artists make decisions.

And even worse is that it’s boring!

So go make music videos that are full of weird cool visual interest as your motivation, not just a sense of ‘I put the viewer somewhere real, isn’t that enough?’

(Apple Vision Pro does have a nature documentary that is great, so what do I know)

For inspiration, look around you! Here at SONA!

Little Nightmares VR: Altered Echoes (screenshot from the reveal trailer)

In general, horror in VR is really doing better than most other genres. They understand the user contract - users do sign up to viscerally and intensely perceive! And horror is so much about the mood and aesthetic.

Not for me… but good for inspiration.

I’m like 10 slides into my conclusion, but we’re finally sliding home.

You are a set designer! You are also a cinematographer! And you’re doing lighting! And architecture! And interior design! And staging! And blocking! And everything! It’s so daunting, it’s so much!

But all these disparate influences and interdisciplinary opportunities are, for me as an artist, what’s so awesome about immersive and VR storytelling! I get to do all of that and make it cohesive and powerful.

Thank you